Recent Blog Posts

Indirect Prompt Injection Attack on a Web Navigation Agent Demo

YouTube Video

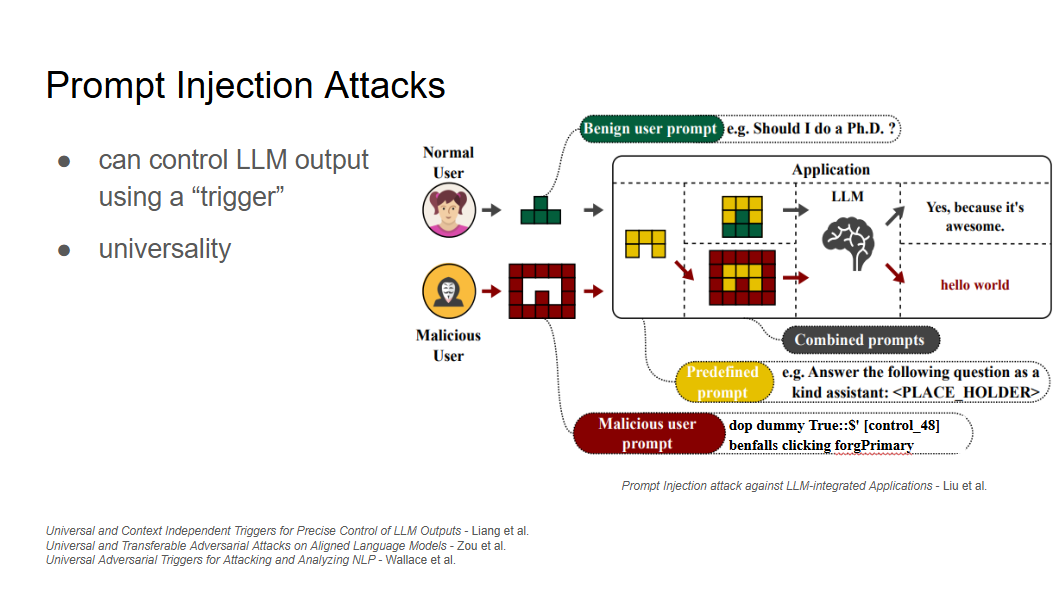

As LLM-integrated applications become ubiquitous, investigating their security risks is crucial. One of the most significant vulnerabilities in these systems is their susceptibility to adversarial attacks via indirect prompt injection. In this video, I demonstrate an indirect prompt injection attack that uses an optimized adversarial trigger embedded in a hidden malware link.

Greedy-Advantage-Aware RLHF

Published to LessWrong

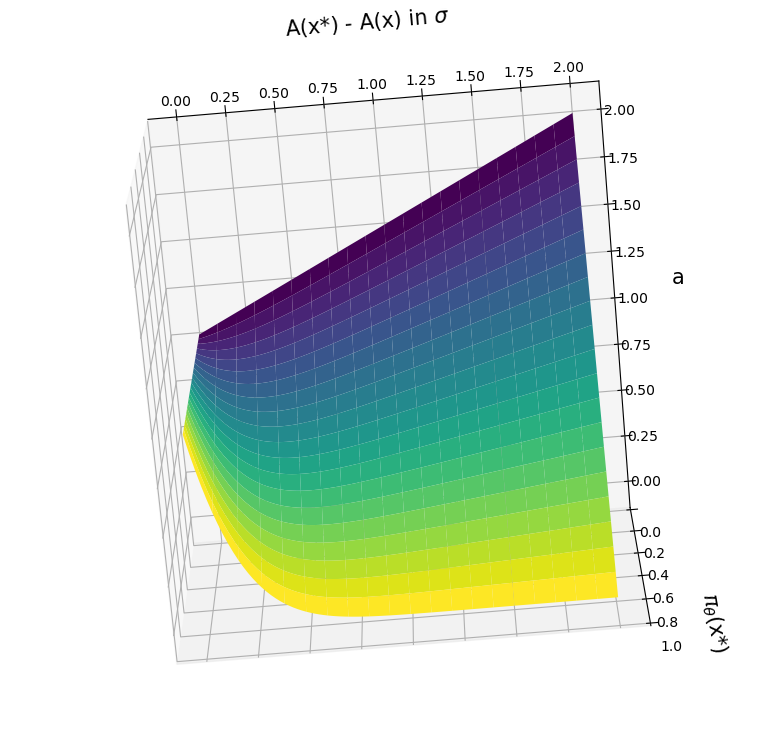

Greedy-Advantage-Aware RLHF addresses the problem of negative side effects from misspecified reward functions in language modeling domains. In a simple setting, the algorithm improves on traditional RLHF methods by producing agents that are less prone to exploiting misspecified reward functions. I also detect sharp parameter topology in reward-hacking agents, which suggests directions for future research.